September 9, 2015 Tech Notes: Introducing the Statistical Model of Human Shape and Pose

This post, the first in a series of tech posts, introduces the biggest tool in our toolbox: our statistical model of human shape and pose. This post assumes you’re a programmer, technical designer, modeler/animator, anthropometrist, or human-factors expert. You may want to read up on polygonal meshes and point clouds if those concepts are new to you.

Body Labs has licensed a decade of academic research, which we’re working hard to bring into the world. The core of that research is our Statistical Model of Shape, Pose, and Motion, which was created to allow computers to accurately perceive and quantify human bodies from 3D scans.

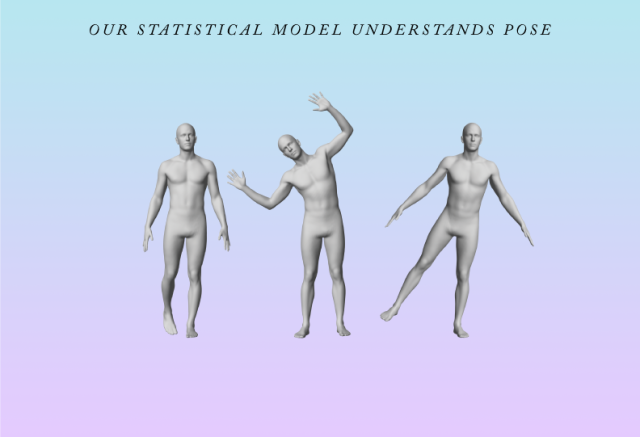

The Statistical Model is proprietary software and data that comprehensively models the surface of the human body. It understands how body parts relate to each other. It knows how a body’s contours change in response to pose and motion.

It understands how each part corresponds from one body to another, regardless of shape or size.

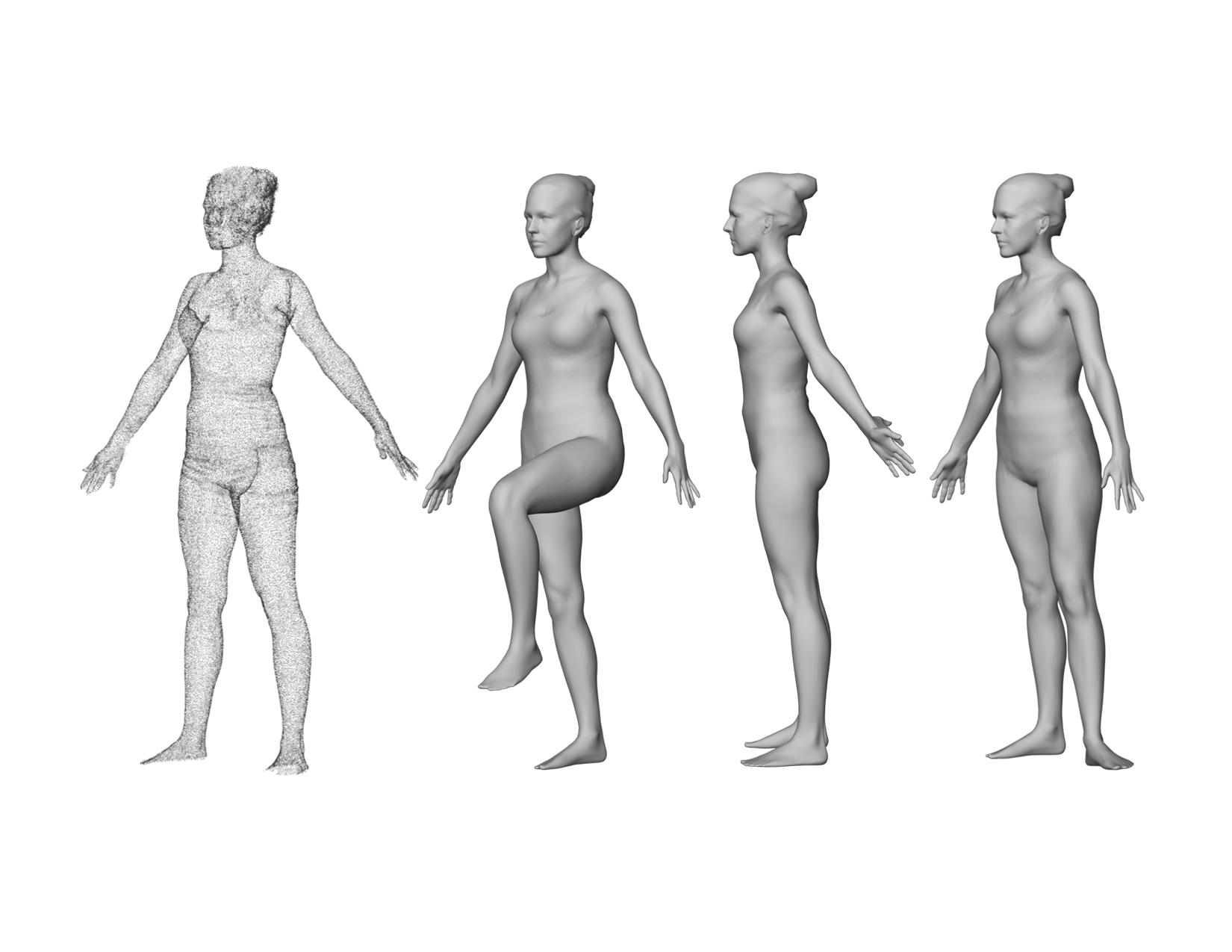

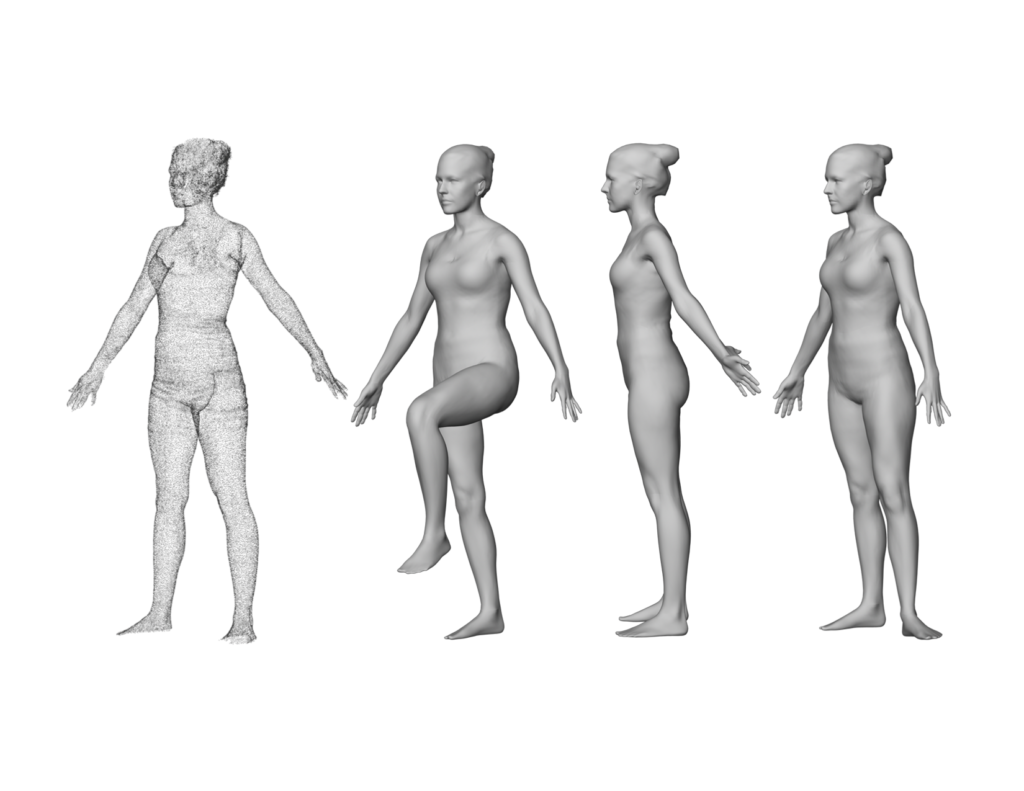

The Model can turn a single 3D scan of a person, which to a computer is a meaningless point cloud, into a smooth and organized polygonal mesh with a known topology. The Statistical Model can then operate on this mesh, reposing it into any pose in its repertoire. Given a motion-capture sequence, the Statistical Model can animate the mesh with rigorous accuracy.
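This post doesn't describe how the Statistical Model reposes a mesh internally. For readers new to reposing, here is a minimal sketch of linear blend skinning, a standard graphics technique in which each vertex follows a weighted mix of rigid bone transforms. The two-bone skeleton, the per-vertex weights, and the function names are hypothetical, and rotations are limited to the z axis to keep the example short.

```python
import math


def rotate_z(v, angle):
    """Rotate a 3D point about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x - s * y, s * x + c * y, z)


def skin(vertices, weights, bones):
    """Linear blend skinning (hypothetical toy skeleton, not the Model's method).

    vertices: list of (x, y, z) rest-pose positions
    weights:  per-vertex tuple of bone weights (each tuple sums to 1)
    bones:    list of (angle, pivot) pairs; each bone rotates about its
              pivot (joint) point around the z axis
    """
    posed = []
    for v, w in zip(vertices, weights):
        acc = [0.0, 0.0, 0.0]
        for weight, (angle, pivot) in zip(w, bones):
            # Move into the joint's frame, rotate, then move back.
            local = tuple(vi - pi for vi, pi in zip(v, pivot))
            r = rotate_z(local, angle)
            for k in range(3):
                acc[k] += weight * (r[k] + pivot[k])
        posed.append(tuple(acc))
    return posed


# Bone 0 stays put; bone 1 bends 90 degrees at a joint located at (1, 0, 0).
bones = [(0.0, (0.0, 0.0, 0.0)), (math.pi / 2, (1.0, 0.0, 0.0))]
rest = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
weights = [(1.0, 0.0), (0.0, 1.0), (0.5, 0.5)]
posed = skin(rest, weights, bones)
```

Plain linear blend skinning is known to produce artifacts, such as joints that collapse under large rotations, which is one reason models that learn pose-dependent surface deformation from real data are attractive.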

Leveraging this known topology, we can then output rich quantification data. Not only can we measure the body like a tailor, we can compute measures like the volume of an individual limb.
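Computing the volume of a limb requires a closed surface. A standard approach (not necessarily the one Body Labs uses) applies the divergence theorem: sum the signed volumes of the tetrahedra formed by the origin and each triangle. The sketch below assumes the limb has already been cut out and capped into a watertight, consistently oriented triangle mesh; that segmentation step is not described in this post, and the tetrahedron inputs are hypothetical.

```python
def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def mesh_volume(vertices, faces):
    """Volume enclosed by a closed triangle mesh.

    Each face (i, j, k) contributes v_i . (v_j x v_k) / 6, the signed volume
    of the tetrahedron it forms with the origin. For a watertight mesh with
    consistent winding the signed terms sum to +/- the enclosed volume.
    """
    total = 0.0
    for i, j, k in faces:
        total += dot(vertices[i], cross(vertices[j], vertices[k]))
    return abs(total) / 6.0


# Toy closed surface: a right tetrahedron with legs of length 1.
tet_vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
tet_faces = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
volume = mesh_volume(tet_vertices, tet_faces)  # 1/6
```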

Such a mesh can be meaningfully and accurately compared to any other mesh generated by the Statistical Model, regardless of how different the bodies, because every one has exactly the same configuration of polygons. We call this rich representation of a person their “Body Model.”
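Because two Body Models share the same vertices in the same order, comparing them can be as simple as pairing up vertices by index. Here is a minimal sketch, with hypothetical function names, that computes per-vertex distances and a single root-mean-square summary. It assumes both meshes are already expressed in the same pose and coordinate frame.

```python
import math


def per_vertex_distances(mesh_a, mesh_b):
    """Distance between corresponding vertices of two same-topology meshes."""
    if len(mesh_a) != len(mesh_b):
        raise ValueError("meshes must share the same vertex count (same topology)")
    return [math.dist(p, q) for p, q in zip(mesh_a, mesh_b)]


def rms_difference(mesh_a, mesh_b):
    """Root-mean-square of the per-vertex distances."""
    d = per_vertex_distances(mesh_a, mesh_b)
    return math.sqrt(sum(x * x for x in d) / len(d))


before = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
after = [(0.0, 0.0, 3.0), (1.0, 4.0, 0.0)]
distances = per_vertex_distances(before, after)  # [3.0, 4.0]
```

Per-vertex distances like these are only meaningful because of the shared topology; two raw scans have no such pairing between their points.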

INTERPRETING SCAN DATA AND “HOLE FILLING”

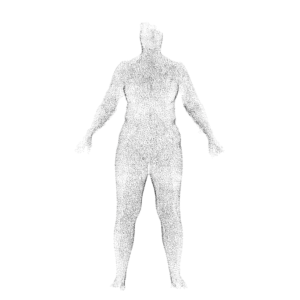

This conversion is necessary because scans are, frankly, pretty lousy assets to work with. A scanner does not produce a volume with a discrete surface; it produces a point cloud. Even the most expensive, room-sized scanners produce noisy results with missing data, sampling errors, and holes resulting from self-occlusion.

A 3D scan is just a bunch of points.

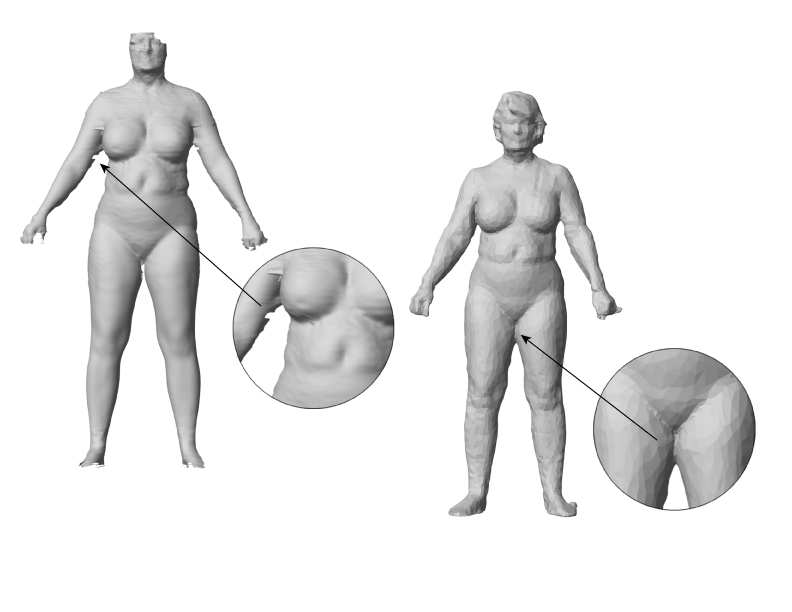

Clearly, obtaining the circumference of a limb from such a point cloud would be guesswork at best. Many body scanners ship with software to extract measurements, but their output is based on “hole filling,” an unsophisticated heuristic process that turns those point clouds into 3D meshes. Hole filling is not informed by real-world body data. Even the best meshes it produces have poor fidelity: their surfaces are lumpy and approximate, and often severely deformed in places.

Hole filling doesn’t repair missing data. And thighs aren’t fused together, even if they touch.

As a result, claims of extracting accurate measurements from these results seem dubious.

OUR APPROACH IS WISER

The Statistical Model of Human Shape and Pose is a completely different approach to perceiving the human form, built on a statistical analysis of the human body as observed in real-world data. It can turn 3D scan data of any person into a new mesh whose structure is clean, smooth, and watertight. It can also move this mesh into any predefined pose from the Statistical Model’s repertoire. Every mesh it produces is in dense point-to-point correspondence with every other. In other words, point 578 is always on the shoulder, and point 1023 is always on the hip. These points correspond usefully between meshes, even if one was scanned from a 140-pound (63 kg) distance runner and the other from a 240-pound (109 kg) couch potato.
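Dense correspondence means a measurement can be defined once, as a list of vertex indices, and then applied to any body. The sketch below measures a "tape measure" loop as the perimeter of the closed polyline through those vertices. The loop indices and toy meshes are hypothetical, and a polyline through surface vertices is a simplification: a real tape measure bridges concavities rather than following them.

```python
import math


def loop_length(vertices, loop):
    """Perimeter of the closed polyline visiting vertices[i] for i in loop."""
    n = len(loop)
    return sum(
        math.dist(vertices[loop[i]], vertices[loop[(i + 1) % n]])
        for i in range(n)
    )


# Hypothetical loop definition, written once and reused for every mesh.
TOY_WAIST_LOOP = [0, 1, 2, 3]

slim = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
broad = [tuple(2.0 * c for c in v) for v in slim]  # same topology, bigger body

slim_waist = loop_length(slim, TOY_WAIST_LOOP)    # 4.0
broad_waist = loop_length(broad, TOY_WAIST_LOOP)  # 8.0
```

The point of the example is that `TOY_WAIST_LOOP` never changes: because vertex indices mean the same body location on every mesh, the same definition measures both bodies.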

The Statistical Model can also tell the difference between a person’s thighs touching and their legs being an uninterrupted volume. The hole filling process could be improved a hundredfold and still not understand that distinction.

THE STATISTICAL MODEL’S UNPRECEDENTED SCALE

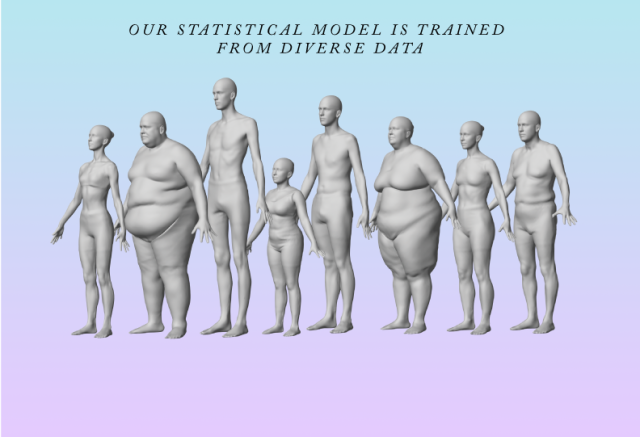

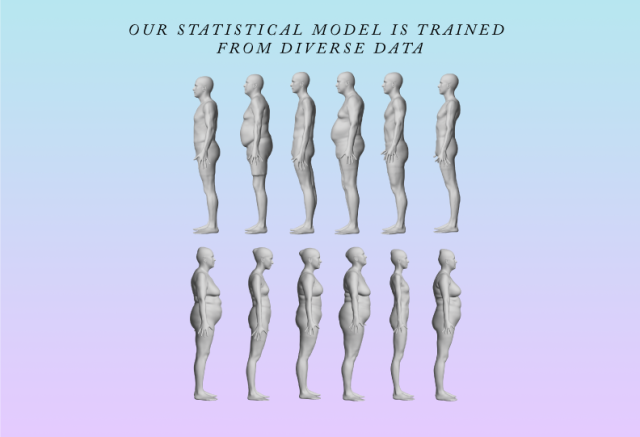

The Statistical Model of Human Shape and Pose is the product of a decade of academic research that began at Brown University and continues today at the Max Planck Institute, under the direction of its originator, Professor Michael Black. It was trained initially on a vast corpus of scans, including CAESAR, and a proprietary corpus of people in various poses collected at Brown and MPI. These tens of thousands of scans have been precisely aligned to a common reference template (more on this in a subsequent post). It is this unprecedented scale that makes the Statistical Model the superior solution for scan interpretation.

The Statistical Model allows us to create a mesh from a set of parameters. These parameters are factored into pose and shape components, meaning the Model can control the shape of a body independently of its pose. Being able to generate a mesh from parameters has an added benefit: Body Labs can generate a statistically probable body mesh from sparse input, such as a few measurements of a person. This ability is visualized through ShapeX, a component of BodyKit, which can build a realistic body from as few as two inputs and becomes more accurate as more data is added. A model that produces data from parameters in this way is called a “generative model.”
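The post doesn't give the Model's equations, so the following is only a toy illustration of the two ideas in this paragraph, under loudly stated assumptions. First, a linear shape space (the style produced by principal component analysis): a mesh is a template plus a weighted sum of per-vertex offset directions. Second, recovering the shape weights from two measurements, assuming each measurement varies linearly with the weights. The template, directions, measurement means, and sensitivity matrix are all hypothetical, and real body measurements need not be linear in any shape parameters.

```python
def generate_vertices(template, shape_dirs, betas):
    """Toy linear shape model: v_i = template_i + sum_k betas[k] * shape_dirs[k][i]."""
    return [
        tuple(
            v[c] + sum(b * d[i][c] for b, d in zip(betas, shape_dirs))
            for c in range(3)
        )
        for i, v in enumerate(template)
    ]


def betas_from_two_measurements(mean_meas, sensitivity, observed):
    """Solve observed = mean_meas + sensitivity @ betas for two unknowns.

    `sensitivity` is a hypothetical 2x2 matrix: how much each measurement
    changes per unit of each shape weight. Solved with Cramer's rule.
    """
    (a, b), (c, d) = sensitivity
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("measurements do not constrain both shape weights")
    r0 = observed[0] - mean_meas[0]
    r1 = observed[1] - mean_meas[1]
    return ((r0 * d - b * r1) / det, (a * r1 - c * r0) / det)


betas = betas_from_two_measurements((0.0, 0.0), ((1.0, 1.0), (1.0, -1.0)), (3.0, 1.0))
# betas == (2.0, 1.0)
body = generate_vertices([(0.0, 0.0, 0.0)], [[(1.0, 0.0, 0.0)], [(0.0, 1.0, 0.0)]], betas)
# body == [(2.0, 1.0, 0.0)]
```

With more measurements than weights, the square solve would become a least-squares fit, which is one way extra inputs can tighten the estimate.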

The Statistical Model is still in active development at the Max Planck Institute. Over the years it has been augmented and refined through iterative retraining, improving its accuracy and giving it new abilities, such as creating body meshes from motion capture data and animating a body with life-like breathing. We will continue to build upon and productize these evolving capabilities as they are developed.

WHAT’S NEXT?

We hope that you’ve found this overview of our core technology to be useful and informative. In the following weeks we will continue this series with posts that explain the entire domain of computer perception and modeling of human bodies. Among other things, we will dive into the technical details of:

- How other people have tried to solve this problem

- Our approach: coregistration of shape and pose

- Some of the math behind the model

- How we turn a 3D point cloud into a body model of that person

- Our methods for processing data from motion capture or consumer sensors

We will also turn our attention to applications, explaining a range of industrial, commercial, and artistic uses for our “Body Model.”

P.S. Our team is growing fast! If you’re a developer and these sound like interesting problems, check out our job openings.